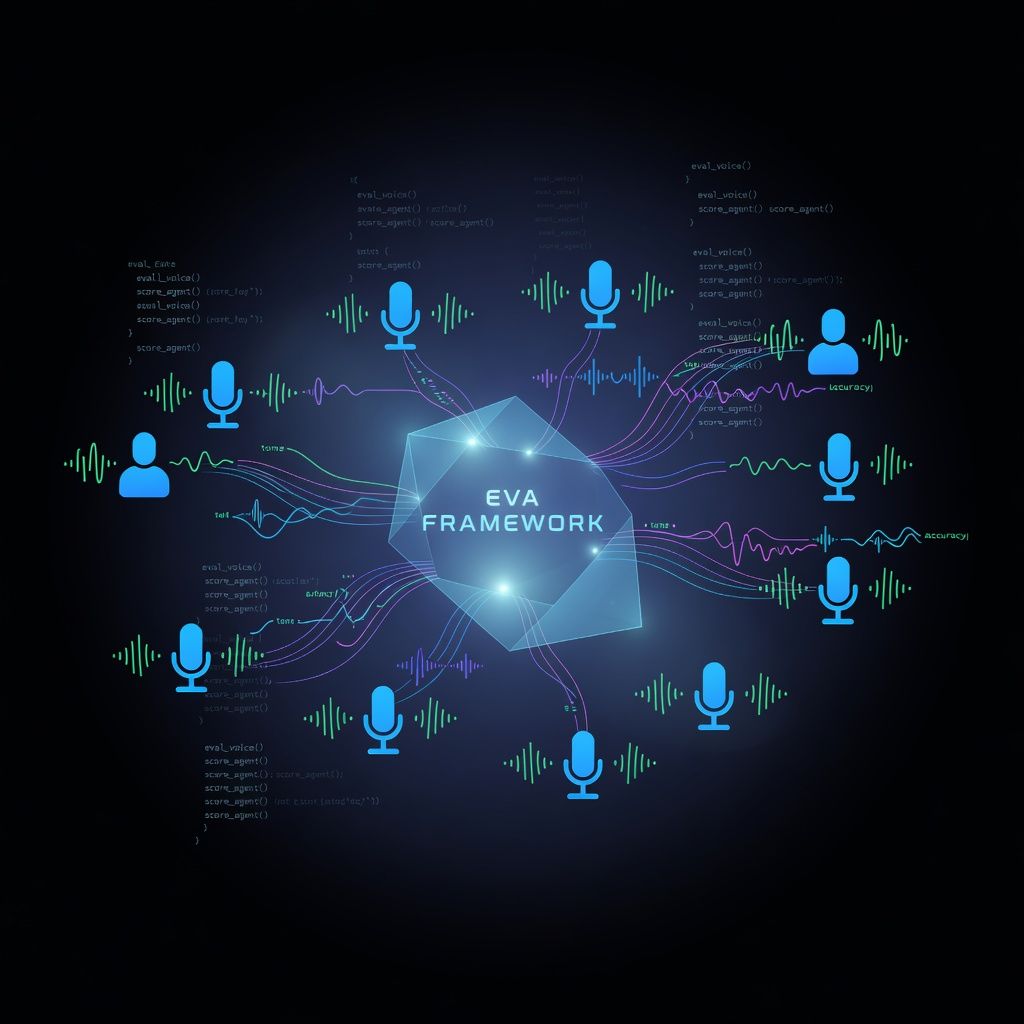

Hugging Face Introduces EVA: A New Framework for Evaluating Voice Agents

Hugging Face has launched EVA, an open-source framework designed to rigorously evaluate the performance of voice AI agents. This tool addresses the critical need for standardized metrics in assessing voice interaction quality, reliability, and safety.

The framework, whose name stands for Evaluation for Voice Agents, aims to standardize how developers and researchers assess voice AI systems. It provides a suite of benchmarks and metrics tailored to voice interactions, moving beyond the text-centric evaluation methods that have dominated the field. With a single platform, teams can measure key performance indicators such as latency, accuracy in noisy environments, and the naturalness of conversational flow.
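To make two of these indicators concrete, here is a minimal Python sketch of how transcription accuracy and response latency are commonly quantified. It does not use or represent EVA's API: the function names `word_error_rate` and `median_latency`, the sample transcripts, and the timing values are all hypothetical, and word error rate is used here only as one standard stand-in for "accuracy".

```python
from statistics import median


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length.

    Hypothetical helper for illustration; not part of EVA.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # deleting all i reference words matches an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def median_latency(latencies_s: list[float]) -> float:
    """Median response latency in seconds over a set of turns (assumed metric)."""
    if not latencies_s:
        raise ValueError("at least one latency measurement is required")
    return median(latencies_s)


if __name__ == "__main__":
    # Made-up example: what the user said vs. what the agent transcribed.
    said = "turn on the kitchen lights"
    heard = "turn on kitchen light"
    print(f"WER: {word_error_rate(said, heard):.2f}")

    # Made-up per-turn response times in seconds.
    print(f"Median latency: {median_latency([0.42, 0.38, 0.55]):.2f} s")
```

Running the same measurements on clean and noise-augmented recordings of identical utterances is one simple way to see how much accuracy degrades in noisy environments.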

EVA's significance lies in the gap it fills. As voice agents become more prevalent in customer service, healthcare, and personal assistants, the lack of standardized evaluation has led to inconsistent performance and unpredictable user experiences. Unlike general text benchmarks, EVA accounts for challenges specific to speech, including disfluencies, background noise, and context retention over long dialogues. Testing voice models under conditions that closely mirror real-world usage gives a more honest assessment of their capabilities.

Looking ahead, EVA's open-source nature invites the broader community to contribute new benchmarks and edge cases, fostering a collaborative approach to improving voice AI. Early reactions suggest the framework could become an important tool for developers integrating voice capabilities into their products, helping them verify reliability before deployment. As the technology evolves, EVA is positioned to help define what constitutes a competent and safe voice agent.