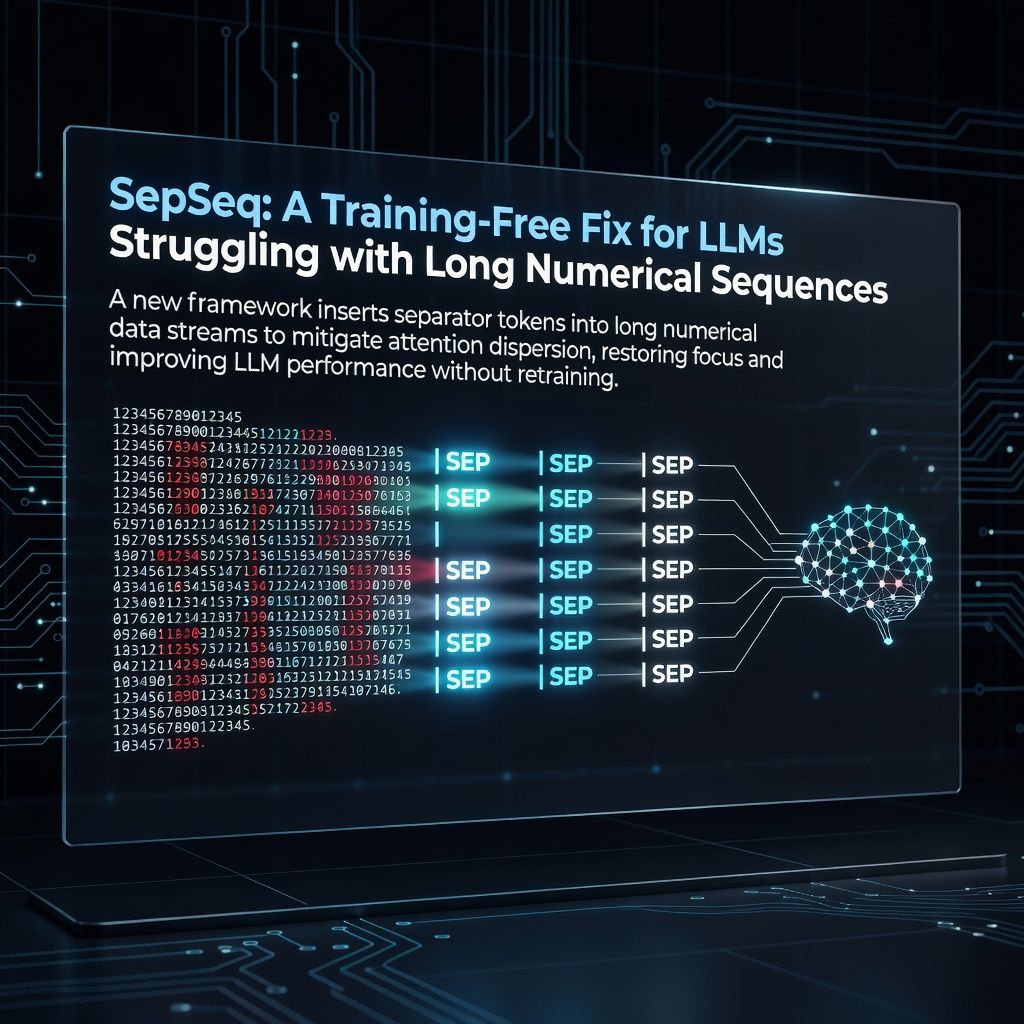

SepSeq: A Training-Free Fix for LLMs Struggling with Long Numerical Sequences

Researchers introduce SepSeq, a training-free framework that inserts separator tokens to counter attention dispersion when LLMs process long numerical sequences. The authors present it as a plug-and-play method that improves performance without model retraining or architectural changes.

Researchers have unveiled SepSeq, a training-free framework designed to address the failure of Large Language Models on long numerical sequences. Although current transformer-based models nominally support very large context windows, their performance degrades sharply on such inputs, which the authors attribute to attention dispersion in the softmax: as the sequence grows, attention weight is spread across more and more tokens. This dispersion prevents the model from concentrating on the relevant data points and leads to inaccurate outputs. The proposed solution strategically inserts separator tokens into the input sequence; these act as anchors that mitigate the dispersion and restore the model's ability to process long numerical data.
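To make the dispersion argument concrete, here is a minimal toy sketch in Python. It is not the paper's analysis: it assumes one relevant token with a fixed score of 2.0 and all other tokens at 0.0, and the helper names `softmax` and `weight_on_target` are hypothetical. Under those assumptions, the softmax weight on the relevant token shrinks as the sequence gets longer.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def weight_on_target(seq_len, target_score=2.0, other_score=0.0):
    """Attention weight on one relevant token among seq_len tokens.

    Assumption: the relevant token scores `target_score` and every other
    token scores `other_score`; real attention logits are not this uniform.
    """
    scores = [target_score] + [other_score] * (seq_len - 1)
    return softmax(scores)[0]

# The weight on the relevant token falls as the sequence length grows.
for n in (16, 256, 4096):
    print(n, round(weight_on_target(n), 4))
```

In this toy setting the weight equals e^2 / (e^2 + n - 1), so it decays roughly as 1/n; real models behave less uniformly, but the same dilution pressure applies.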

The significance of this development lies in its simplicity and immediate applicability. Unlike approaches that require extensive fine-tuning or architectural overhauls, SepSeq is a plug-and-play solution that can be applied to existing models without any retraining. By demonstrating mechanistically that separator tokens can realign attention, the research offers a practical way to improve LLMs in domains such as financial forecasting, scientific data analysis, and time-series prediction, where precision over long numerical inputs is paramount. The approach avoids the computational cost of training new models while addressing a fundamental limitation of current architectures.
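Because the method operates on the input rather than on the model, it can be pictured as a preprocessing step. The sketch below is an illustration only: the function name `insert_separators`, the `|` separator, the chunk size, and the fixed-interval placement are all assumptions, since this summary does not specify which token SepSeq uses or where it is placed.

```python
def insert_separators(numbers, chunk_size=8, separator="|", delimiter=", "):
    """Serialize numbers as text with a separator between fixed-size chunks.

    Assumptions (not taken from the paper): the separator symbol, the
    chunk size, and the fixed-interval placement are illustrative choices.
    """
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    chunks = [
        delimiter.join(str(x) for x in numbers[i:i + chunk_size])
        for i in range(0, len(numbers), chunk_size)
    ]
    return f" {separator} ".join(chunks)

# The resulting string would be placed in the prompt of an unmodified model.
prompt_body = insert_separators([1, 2, 3, 4, 5, 6, 7], chunk_size=3)
print(prompt_body)  # 1, 2, 3 | 4, 5, 6 | 7
```

Keeping the step as a pure string transformation means no weights, tokenizer vocabulary, or architecture need to change, which is the sense in which such an intervention is training-free.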

Looking ahead, SepSeq is positioned for quick uptake by researchers and industry practitioners working on long-context numerical tasks. The paper is publicly available on arXiv, which should make it easy for others to reproduce, iterate on, and potentially integrate the method into existing model pipelines. Open questions remain about how it scales to even longer sequences and how it performs on non-numerical long-context tasks. As the community tests the method, it will likely prompt further investigation into how simple token-level interventions can improve transformer-based architectures.