New Framework Tackles LLM Inconsistency in Sentiment Analysis

Researchers introduce SSAS, a framework to improve consistency in LLM-based sentiment predictions. It targets the volatility of current models' outputs, a problem that hampers enterprise decision-making.

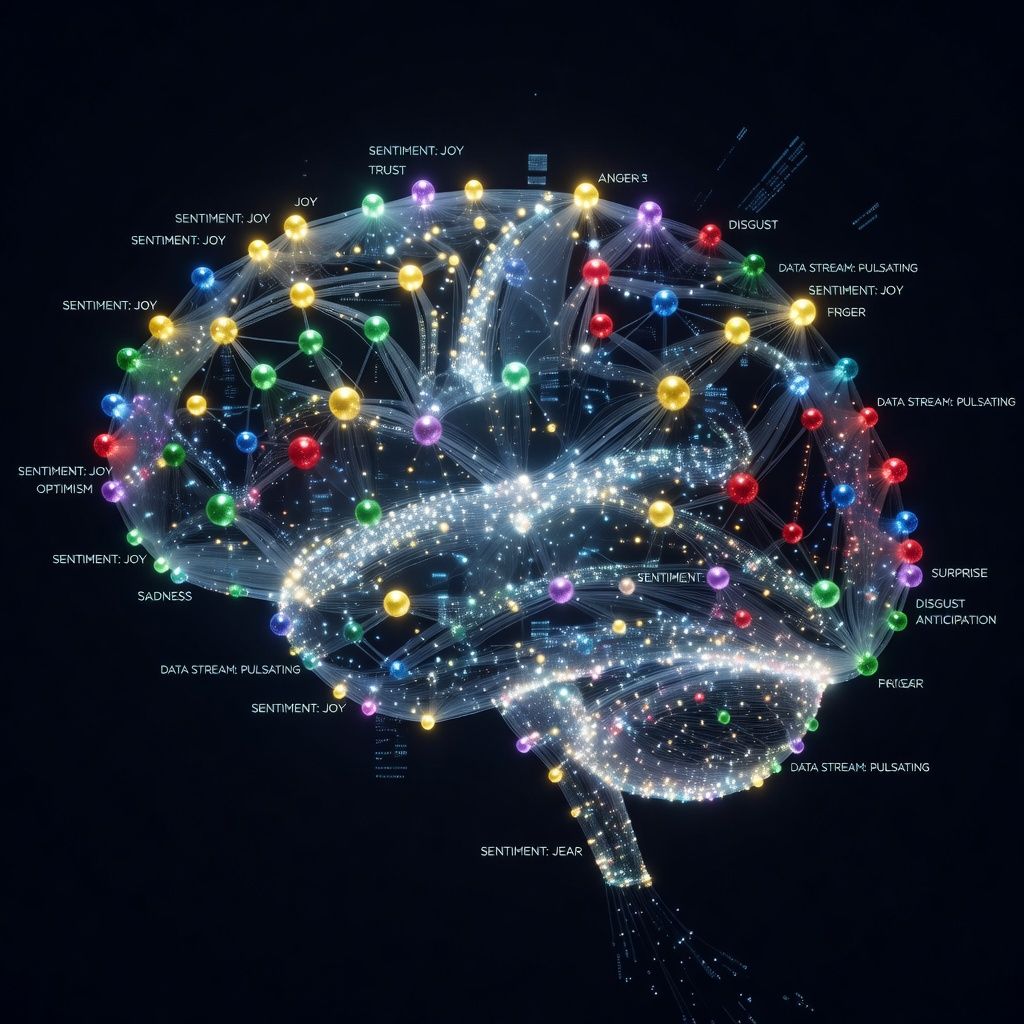

Researchers have developed a new framework called Syntactic & Semantic Context Assessment Summarization (SSAS) to make sentiment predictions from Large Language Models (LLMs) more consistent. The study, posted on arXiv, highlights the inherent stochasticity of LLMs: the same input can produce different outputs from one run to the next, which makes the results unreliable for strategic business decisions. The chaotic nature of modern datasets exacerbates the problem, leading to volatile sentiment predictions.
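To make the consistency problem concrete, the sketch below scores how often repeated sentiment predictions for one text agree with each other. It does not implement SSAS and is not taken from the study. The `noisy_classifier` function is a hypothetical stand-in for a stochastic LLM call, and `agreement_rate` is just one simple way such volatility might be quantified.

```python
import random
from collections import Counter

LABELS = ["positive", "neutral", "negative"]


def noisy_classifier(text, rng, flip_prob=0.3):
    """Hypothetical stand-in for an LLM sentiment call (an assumption, not a real API).

    Returns a keyword-based label, but with probability flip_prob
    returns a randomly chosen label instead, mimicking stochastic output.
    """
    base = "positive" if "good" in text.lower() else "negative"
    if rng.random() < flip_prob:
        return rng.choice(LABELS)
    return base


def agreement_rate(labels):
    """Fraction of runs that match the most common label (1.0 means fully consistent)."""
    if not labels:
        raise ValueError("labels must not be empty")
    most_common_count = Counter(labels).most_common(1)[0][1]
    return most_common_count / len(labels)


# Classify the same text 20 times and measure how consistent the labels are.
rng = random.Random(0)
runs = [noisy_classifier("The product is good", rng) for _ in range(20)]
print(f"agreement rate over {len(runs)} runs: {agreement_rate(runs):.2f}")
```

A score near 1.0 means the repeated runs almost always return the same label, while lower scores indicate the kind of volatility the article describes.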

The SSAS framework aims to mitigate these challenges by providing a structured approach to assessing and summarizing syntactic and semantic contexts. This method could significantly improve the reliability of LLM-based analytics, making them more suitable for enterprise applications. The framework is particularly relevant in industries where consistent sentiment analysis is crucial for decision-making, such as finance, marketing, and customer service.

The research team plans to further refine the SSAS framework through extensive testing and collaboration with industry partners. Future developments may include integrating the framework with other AI models to enhance its accuracy and robustness. The long-term goal is to establish SSAS as a standard tool for consistent sentiment analysis, addressing the current limitations of LLMs in enterprise settings.