New Research Aims to Make AI Agents More Secure and Auditable

Researchers propose a unified graph representation to track AI agents' decisions, making it easier to audit their actions. This could help ensure AI systems behave as intended and follow security protocols.

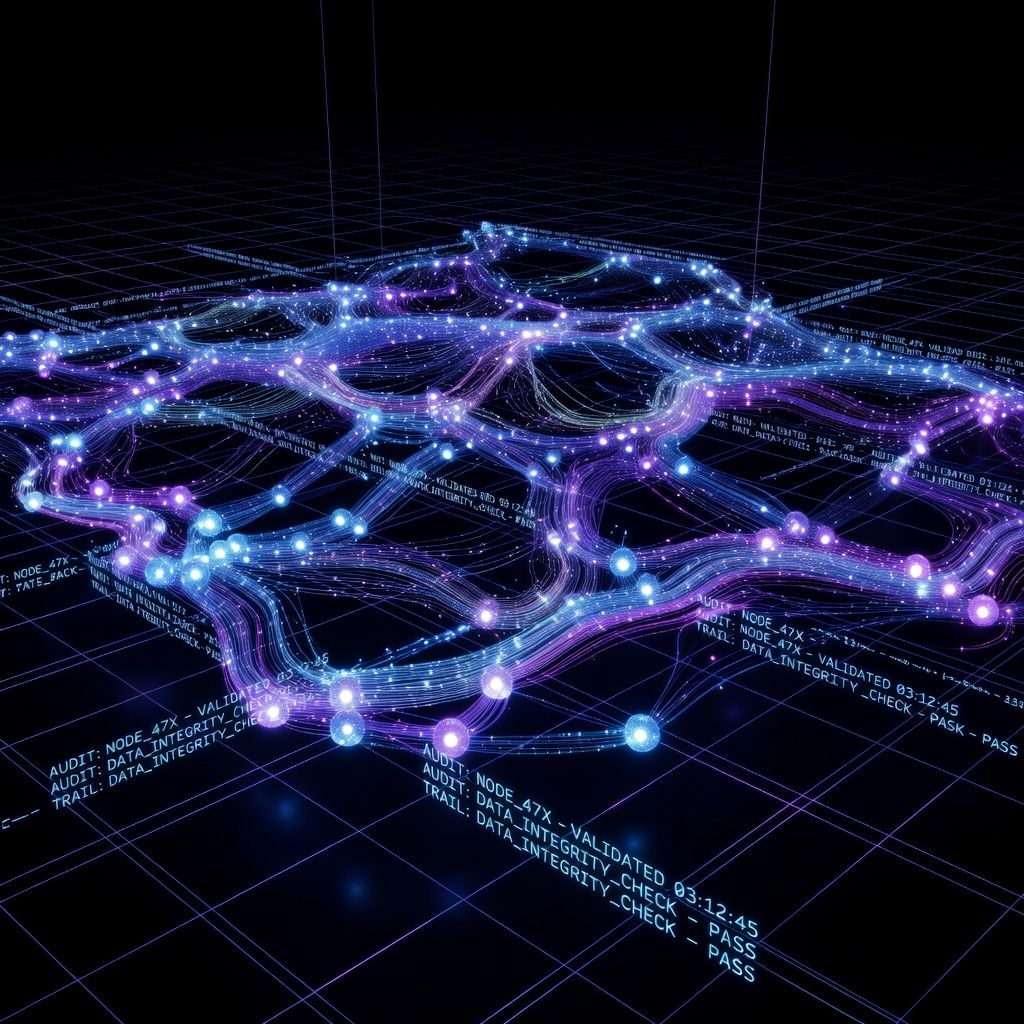

Researchers have introduced a new method to improve the security and auditing of AI agents. These agents, which perform complex tasks autonomously, often lack transparency in how they make decisions. The proposed solution uses a unified graph representation to track the agents' cognitive states and actions, providing a clearer picture of their behavior.
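To make the idea concrete, here is a minimal sketch of what a graph-based audit trail could look like. It is not the researchers' actual representation: the `AuditGraph` class, the "state" and "action" node kinds, and the relation names are all assumptions chosen for illustration. The sketch records what the agent believed and what it did as nodes, links them with edges, and lets an auditor ask which beliefs led to a given action.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    node_id: str
    kind: str   # hypothetical kinds: "state" (what the agent believed) or "action"
    label: str


@dataclass
class AuditGraph:
    """Toy audit graph: nodes are states/actions, edges are (src, dst, relation)."""
    nodes: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)

    def add_node(self, node_id, kind, label):
        self.nodes[node_id] = Node(node_id, kind, label)

    def add_edge(self, src, dst, relation):
        if src not in self.nodes or dst not in self.nodes:
            raise KeyError("both endpoints must be added before linking them")
        self.edges.append((src, dst, relation))

    def trace(self, node_id):
        """Return ids of every node that leads to node_id, walking edges backwards."""
        seen = []
        stack = [node_id]
        while stack:
            current = stack.pop()
            for src, dst, _ in self.edges:
                if dst == current and src not in seen:
                    seen.append(src)
                    stack.append(src)
        return seen

    def unjustified_actions(self):
        """Return ids of actions that no state node points to."""
        flagged = []
        for node in self.nodes.values():
            if node.kind != "action":
                continue
            has_reason = any(
                dst == node.node_id and self.nodes[src].kind == "state"
                for src, dst, _ in self.edges
            )
            if not has_reason:
                flagged.append(node.node_id)
        return flagged


# Hypothetical scheduling-assistant run.
graph = AuditGraph()
graph.add_node("s1", "state", "User asked to reschedule the meeting")
graph.add_node("s2", "state", "Calendar shows a conflict at 3pm")
graph.add_node("a1", "action", "Move meeting to 4pm")
graph.add_node("a2", "action", "Email all attendees")
graph.add_edge("s1", "s2", "led_to")
graph.add_edge("s2", "a1", "justifies")

print(graph.trace("a1"))            # states behind the reschedule
print(graph.unjustified_actions())  # actions with no recorded reason
```

In this toy run, an auditor could trace the rescheduling back to the user's request and the calendar conflict, while the attendee email would be flagged because nothing in the graph explains it. A real system would likely need richer node types, timestamps, and tamper-resistant storage.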

This development matters because it addresses a growing concern in AI: ensuring that autonomous systems act safely and securely. Imagine an AI assistant managing your schedule. You'd want to know that it is making decisions based on accurate information and following security protocols. The new approach could help auditors and developers verify that AI agents are behaving as expected.

If you're curious about how this might affect you, keep an eye on AI tools that emphasize security and transparency. As this research progresses, we may see more AI systems that not only perform tasks but also provide detailed logs of their decision-making processes. That could build greater trust in AI agents across a range of applications, from personal assistants to business tools.